Connection Servers are one of the most important components in any Horizon environment, and they come in two flavors – the standard connection server and the replica connection server.

Connection Servers handle multiple roles in the Horizon infrastructure. They perform primary user authentication against Active Directory, manage desktop pools, provide a portal to access desktop pools and published applications, and broker connections to desktops, shared hosted desktops, and published applications.

When you run the Horizon installer, you will see two connection server types – the standard connection server and the replica connection server. The Standard and Replica Connection Servers have the same feature set and perform identically after installation has completed. The only difference between the two is that the standard connection server is the first server installed in the pod and initializes a new environment while a replica server copies data from a server in an existing environment.

Note: When you run the Connection Server installer, you will see a third role – the TrueSSO Enrollment Server role. This role is used to enable TrueSSO, which provides passwordless authentication to desktops in conjunction with VMware Workspace ONE Access. That role will not be covered at this time.

In previous versions of Horizon, there was another connection server role – the Security Server. The Security Server was a stripped-down version of the regular Connection Server designed to provide secure remote access. It was discontinued in Horizon 8 and has been replaced by the Unified Access Gateway.

Architecture and Concepts

Before we go through the steps to deploy a Horizon Connection Server, let’s cover some basic architecture and concepts around Connection Servers.

VMware uses the “pod and block” concept to describe the basic Horizon architecture. A pod is a cluster of Horizon connection servers. Pods can scale out to 7 connection servers supporting up to 10,000 user sessions. Horizon uses Microsoft Active Directory Lightweight Directory Services (AD LDS, formerly ADAM) as its primary datastore. The AD LDS instance contains all of the common configuration for the Horizon pod, such as vCenter information, Instant Clone AD provisioning accounts, and pool configuration and entitlements. Each connection server in the pod has a local copy of the AD LDS instance.
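
As a rough planning aid, the pod limits quoted above can be turned into a quick calculation. This is a minimal sketch that takes the 7-server and 10,000-session figures from this article as defaults; the function name is hypothetical, and the numbers should be checked against VMware's current sizing guidance before they are used for a real design.

```python
# Limits as quoted in this article; treat them as assumptions and confirm
# them against the current VMware configuration maximums.
MAX_SESSIONS_PER_POD = 10_000
MAX_CONNECTION_SERVERS_PER_POD = 7


def pods_required(total_sessions, sessions_per_pod=MAX_SESSIONS_PER_POD):
    """Return the minimum number of pods needed for the given session count."""
    if total_sessions < 0 or sessions_per_pod <= 0:
        raise ValueError("session counts must be non-negative and the limit positive")
    # Integer ceiling division: round up to the next whole pod.
    return -(-total_sessions // sessions_per_pod)
```

For example, `pods_required(25_000)` returns 3, which is the point where multiple pods would need to be planned for.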

All connection servers in the pod must be in the same physical datacenter, and Cloud Pod Architecture must be used if multi-site deployments are required. VMware does not support Horizon deployments where connection servers in the same pod are spread across sites. This includes sites where active-active storage technologies, such as VPLEX and stretched vSAN, are used. If active-active storage technologies are used, the Horizon management components must all be pinned to one site. They can manage desktops that are deployed into the other site, but all of the connection servers must be located together.

There are two reasons for this – the AD LDS instance mentioned above and the message bus technology used for communications between the connection servers. AD LDS can be prone to split-brain and USN rollback issues in the event of a network or server outage, especially if a server is operating on its own or has been restored from a backup or snapshot. These issues can prevent servers from replicating data and from resolving replication conflicts when two connection servers hold different data, which will impact the stability and operation of the environment.

Horizon uses a Java Message Service (JMS) message bus for communications between the connection servers, and this requires extremely low latency (sub-millisecond) to ensure that all connection servers are in sync. If the connection servers are not in sync, two users may be assigned the same floating desktop, which will lead to errors. Simon Long has a great blog post explaining this in more detail.
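
To get a feel for whether two connection servers are close enough, one option is to time TCP connections between them. The sketch below is only a rough proxy: TCP connect time includes more than raw network latency, and it is not a VMware-provided check. The function names are hypothetical, and the 1 ms threshold simply reflects the sub-millisecond figure mentioned above.

```python
import socket
import statistics
import time


def measure_tcp_connect_ms(host, port, attempts=10, timeout=2.0):
    """Return a list of TCP connect times to host:port, in milliseconds."""
    samples = []
    for _ in range(attempts):
        start = time.perf_counter()
        # Open and immediately close a connection; only the setup time matters.
        with socket.create_connection((host, port), timeout=timeout):
            pass
        samples.append((time.perf_counter() - start) * 1000.0)
    return samples


def summarize_latency(samples_ms, threshold_ms=1.0):
    """Summarize the samples and flag whether the median is under the threshold."""
    median = statistics.median(samples_ms)
    return {
        "median_ms": median,
        "max_ms": max(samples_ms),
        "within_threshold": median < threshold_ms,
    }
```

Run from one connection server against a peer, a call such as `summarize_latency(measure_tcp_connect_ms("cs02.example.com", 443))` gives a quick first impression. The host name and port here are placeholders for whatever is reachable in your environment.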

In the Horizon block and pod architecture, blocks are the vSphere resources that desktops and RDSH servers will run on. A block consists of a vCenter Server for management and one or more ESXi hosts or clusters for running virtual machines. A Horizon deployment can have one or more resource blocks. The management components can run alongside desktop or RDSH resources, but this is not recommended for most deployments.

Note: While the Horizon connection servers must be located together in the same site, the desktop resources do not need to be located in the same site as the Connection Servers.

Installing the First Connection Server

Before you can begin installing the Horizon Connection Server, you will need to have a server prepared that meets the minimum requirements. The basic requirements, which are described in Part 2, are a server running Windows Server 2012 R2 or later with 2 CPUs and at least 4GB of RAM.

Note: If you are going to have more than 50 virtual desktop sessions on a Connection Server, it should be provisioned with at least 10GB of RAM.
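
The sizing rules above are simple enough to capture in a small pre-flight check. This is a minimal sketch using only the figures from this article (2 CPUs, 4GB of RAM, and 10GB once a server handles more than 50 sessions); the function name is hypothetical, and it does not check the operating system version.

```python
def check_connection_server_sizing(cpus, ram_gb, expected_sessions=0):
    """Return a list of sizing problems; an empty list means the server passes."""
    problems = []
    if cpus < 2:
        problems.append(f"{cpus} CPU(s) found, at least 2 required")
    # More than 50 sessions raises the RAM requirement from 4GB to 10GB.
    required_ram_gb = 10 if expected_sessions > 50 else 4
    if ram_gb < required_ram_gb:
        problems.append(
            f"{ram_gb}GB of RAM found, at least {required_ram_gb}GB required"
        )
    return problems
```

A server with 2 CPUs and 4GB of RAM passes for a small lab, but the same server fails the check once `expected_sessions` goes above 50.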

Once the server is provisioned, and the Connection Server installer has been copied over, the steps for configuring the first Connection Server are:

1. Launch the Connection Server installation wizard by double-clicking on VMware-viewconnectionserver-x86_64-8.x.x-xxxxxxx.exe.

2. Click Next on the first screen to continue.

3. Accept the license agreement and click Next to continue.

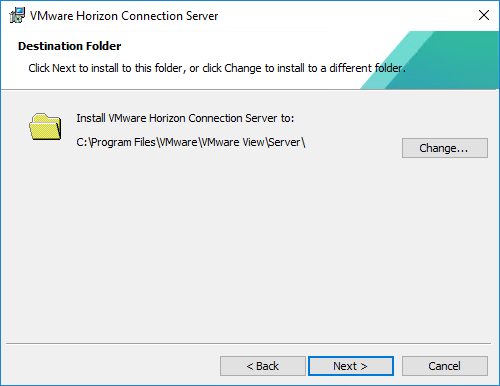

4. If required, change the location where the Connection Server files will be installed and click Next.

5. Select the type of Connection Server that you’ll be installing. For this section, we’ll select the Horizon Standard Server. If you plan on allowing access to desktops through an HTML5 compatible web browser, make sure that “Install HTML Access” is selected. This option should be enabled by default. Select the IP protocol that will be used to configure the Horizon environment. Click Next to continue.

If you are installing a standard server to create a new pod, please skip to Step 6. If this is a replica server to expand an existing pod, please see Step 5a.

5a – Applies only to Replica Servers. If you are installing a Horizon Replica Server, you will be prompted to provide the IP address or host name of another server in the pod. This is the server that the AD LDS instance will be replicated from.

6. Enter a strong password for data recovery. This will be used if you need to restore the Connection Server’s LDAP database from backup. Make sure you store this password in a secure place. You can also enter a password reminder or hint, but this is not required.

7. Horizon requires a number of ports to be opened on the local Windows Server firewall, and the installer will prompt you to configure these ports as part of the installation. Select the “Configure Windows Firewall Automatically” option to have this done as part of the installation.

Note: Disabling the Windows Firewall is not recommended.

8. The installer will prompt you to select the default Horizon environment administrator. You can either select the local Administrators group, which will grant administrator privileges to all local admins on the server, or select a specific domain user or group. The option you select will depend on your environment, your security policies, and/or other requirements.

If you plan to use a specific domain user or group, select the “Authorize a specific domain user or domain group” option and enter the user or group name in the “domainname\usergroupname” format.

Note: If you plan to use a custom domain group as the default Horizon administrator group, make sure you create it and allow it to replicate before you start the installation.

9. Choose whether you want to participate in the User Experience Improvement program. If you do not wish to participate, just click Next to continue.

10. If you are installing into an SDDC on a public cloud, such as VMware Cloud on AWS, select the cloud service from the drop down box. Otherwise, select General. Click Install to begin the installation.

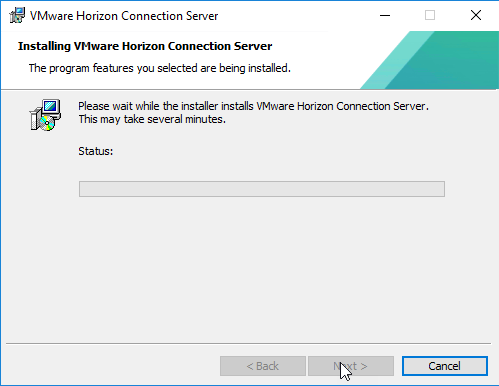

11. The installer will install and configure the application and any additional Windows roles or features that are needed to support Horizon.

12. The installer will wait for the Horizon web apps to start.

13. Once the install completes, click Finish. You may be prompted to reboot the server after the installation completes.
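
Step 8 expects the administrator account in the “domainname\usergroupname” format, and a typo there is easy to miss. If you keep installation values in a script or runbook, a small check like the following can catch malformed entries early. This is a minimal sketch with a hypothetical function name; it only validates the shape of the string and does not confirm that the account exists in Active Directory.

```python
import re

# One domain part, a single backslash, then the user or group name.
_ACCOUNT_PATTERN = re.compile(r"^(?P<domain>[^\\/\s]+)\\(?P<name>[^\\/]+)$")


def parse_domain_account(value):
    """Split 'domainname\\usergroupname' into a (domain, name) tuple."""
    match = _ACCOUNT_PATTERN.match(value.strip())
    if match is None:
        raise ValueError(f"expected domainname\\usergroupname, got {value!r}")
    return match.group("domain"), match.group("name")
```

For example, `parse_domain_account(r"CORP\Horizon Admins")` returns `("CORP", "Horizon Admins")`, while a value without a backslash raises `ValueError`. The domain and group names here are placeholders.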

Configuring the Locked.Properties file

Before you sign in to Horizon Administrator to configure the environment, or allow users to connect to a new connection server in an existing pod, a configuration change needs to be made.

Horizon is configured to perform origin checking on all incoming connections. This can prevent users from accessing the HTML5 client and admin interface through a load balanced URL or the Unified Access Gateway. This KB explains the issue in more detail.

In order to properly configure Horizon, we will need to create a file called locked.properties on the connection server. The locked.properties file is a simple text file, and it needs to be created in C:\Program Files\VMware\VMware View\Server\sslgateway\conf.

The steps for configuring HTML client access when using load balanced connection servers can be found in the VMware documentation here, and the steps for configuring HTML client access when using the Unified Access Gateway can be found here. Depending on your environment, one or both of the articles may apply, and these changes must be applied to each connection server in the pod.

The connection server services will need to be restarted after the changes have been made.
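
Because the same file has to be created on every connection server in the pod, it can help to generate its contents rather than type them by hand. The sketch below assumes the `balancedHost` and `portalHost.N` property names that, to the best of my knowledge, the VMware HTML Access articles use for load balancers and Unified Access Gateways; confirm them against the linked documentation before relying on this. The function name and host names are hypothetical.

```python
def render_locked_properties(balanced_host=None, portal_hosts=()):
    """Build locked.properties text for load-balanced and/or UAG access."""
    lines = []
    if balanced_host:
        # Assumed property for the load-balanced name users connect to.
        lines.append(f"balancedHost={balanced_host}")
    # Assumed numbered properties, one per Unified Access Gateway name.
    for index, host in enumerate(portal_hosts, start=1):
        lines.append(f"portalHost.{index}={host}")
    return "".join(line + "\n" for line in lines)
```

The returned text can then be saved as locked.properties in the sslgateway\conf folder mentioned above on each connection server, followed by the service restart.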

Origin checking is not the only thing the locked.properties file is used for. This file is used to control the behavior of the web server used with Horizon. You can learn more about the Cross-Origin Resource Sharing options used in the locked.properties file here.

Note: The locked.properties file is also used for configuring HTTP settings, Smart Card Access, and other features of the web server used by Horizon. Please see the Horizon documentation at docs.vmware.com for more details.

Now that the Connection Server is installed, it’s time to begin configuring the Horizon application so the Connection Server can communicate with vCenter as well as setting up any required license keys and the events database. Those steps will be covered in the next part.
